Graphical User Interfaces. Humans love them and use them for everything: phones, tablets, web pages, desktop operating systems, factory control systems, even watches! But are the days numbered for GUIs?

I am going to go out on a limb and say that for the near future, we are still going to need displays. The eyes have a massive bandwidth compared to the ears and can take in a lot more information, much quicker, than audio allows. However, humans can think and speak with a much higher bandwidth than the current input devices we use. The problem is that a finger, or even that damn mouse, is so useful at pointing at things we cannot describe.

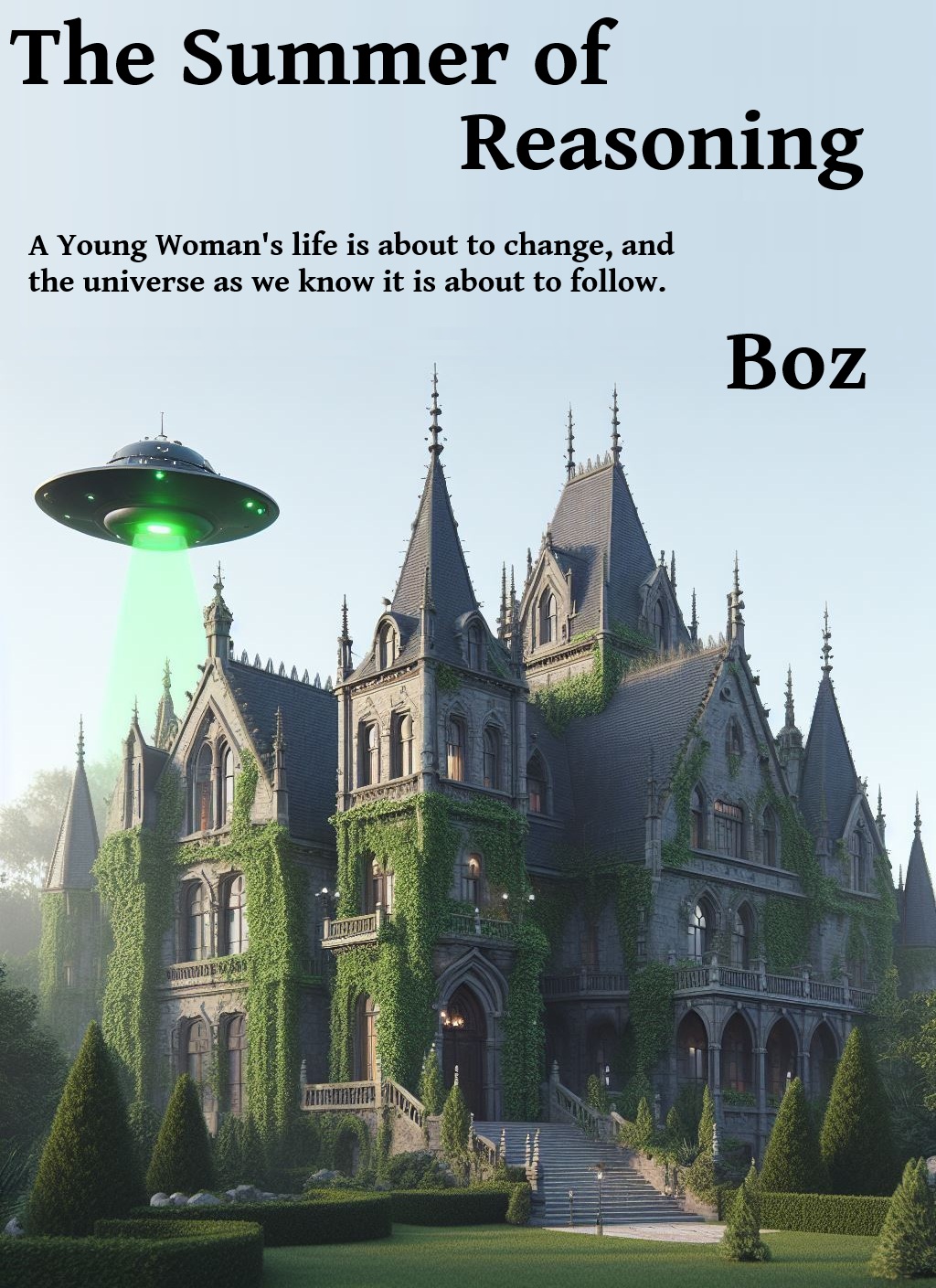

Perhaps Not The Best Example!

Windows 8 was not the most startling example of good GUI design. Having massive icons and 200 point fonts animating and shuffling about on my 30 inch monitor was not the experience I was hoping for after the perfectly usable desktop that was Windows 7. So why did Microsoft change a perfectly workable desktop? And why are we constantly re-inventing the GUI?

More importantly, why, even when there is a perfectly good, solid set of guidelines for the designer, do we insist on ignoring them and rolling our own? Are we really so shallow that we have to keep the graphic designers in a job?

What the hell are these two icons going to mean to somebody born in the year 2025? I have no idea!

More importantly, will we still need them when those people can just say “Order Me A Pizza” and their much more advanced AIs reply, “Sure, Veggie Delight, or the Meat Special?”

Yet if you have ever tried to use voice software for anything remotely realistic recently, it still just feels wrong. Talking to your car, home assistant or phone, you generally still feel a bit stupid, even when nobody else is listening. With people watching, that multiplies by ten!

The current AI models have nailed a lot of the accuracy, and even with my stupid Manchester accent they now seem to get just about everything I say right. Yet none of this wonderful technology has fed through to my Google Home thing, my iPhone or my Model 3. It is much easier to ask the wife to change the car stereo to play the MP3s on my car than to ask the Tesla AI to do it. Eventually I googled the correct words: “Switch To USB” is what you have to say, in case you’re interested.

I doubt I could ever say “Move the cursor to the paragraph above where it says Windows 7 and change it to Windows 8” quicker than I could do it with my mouse and keyboard, and I would need to be a masochist to want to work that way. What about even more complicated work, like designing a circuit board or a machine part using CAD? Could you ever achieve the same throughput with voice? Yet a lot of people are now doing it with images and videos (guilty as charged!).

So maybe not spoken voice, but what about your internal voice (that monolog now going on in the front of your mind as you read this sentence)? That can probably do it, because as you position the mouse, hold the left button down and move the mouse, or type on the keyboard, this voice has already instructed your hands to do it. So maybe that is the part of the brain we need to tap into!

I know that when I sit behind a good CAD engineer or artist I can easily describe the widget I want making, or the change I want, even by voice, but there I am looking over his or her shoulder at the screen while I am instructing them. Will we ever get to that stage with AI CAD? Well, I guess we will at some point, or maybe by that point the AI will just know what we want and design it!

Regardless of how well voice control works, we are still going to need visual and audible feedback, and there is still a lot to explore in this space. VR, AR (XR!) are only the beginning.